We recently upgraded Tableau from version 8.0 (32-bit) to 8.1.7 (64-bit), and set up automatic login (Single Sign-On) and PostgreSQL access (access to the Tableau system database). After successfully upgrading the application and configuring these features, I would like to share my experience, as it may be useful if you face a similar situation.

Clariba receives SAP Recognized Expertise certification in Business Intelligence and SAP HANA

Business intelligence for SMEs - faster time to value and more affordable than ever

Given fierce competition and growing customer demands, small and medium-sized enterprises (SMEs) today need to invest in BI capabilities to stay relevant and meet these demands. However, with scarce resources available, alignment between business and IT is vital to ensure success, and new hosted BI solutions that free up cash flow are attractive options for SMEs.

The top wins of SAP Business Warehouse (BW) and why Clariba can implement this BW solution

Which top BI and analytics trends and predictions should you watch for in 2014?

Is your organization ready for "After Big Data"?

A recent article in the Harvard Business Review by Thomas Davenport, professor and fellow at MIT, caught my attention. Davenport refers to the beginnings of a new era: “After Big Data” in his article Analytics 3.0. While organizations built around massive data volumes — such as Facebook, LinkedIn, eBay, Amazon and others — may be ready to move to the next wave of analytics, the full spectrum of big data capabilities is yet to be realized in the traditional organizations we work with on a daily basis.

Do you know what drives your business?

Knowing your business and making smarter decisions to keep up with the everyday risks involved in running it is essential to surviving in today's global economy.

Making effective decisions requires information. This information must be accurate and up to date, and at the right level of detail for you to move forward at optimal speed. Key business analytics is what allows you to draw insight from the data you collect across the different parts of your business. When you understand exactly what is driving your business, where new opportunities are coming from and where mistakes were made, you can be proactive in maximizing existing revenue and uncovering areas for expansion. The best decisions are made when you have more visibility into the vital knowledge that comes from your own company.

SAP Business Intelligence (BI) solutions provide a window into your company. A dashboard, for example, is a single, reliable, real-time overview of your business. It gives you a quick view in visually appealing formats that are easy to understand. It also offers "what-if" testing that lets you gauge the impact of a particular change on the business. All of this can also be made available on mobile devices, so you can make informed decisions on the move. When you have information you can trust, you can act quickly and stay ahead of the game.

With years of expertise in BI, Clariba has helped several companies to draw insight from their data. For example, Vodafone Turkey's Marketing department sought our help to provide the Customer Value Management Team with a dynamic and user-friendly visualization and analysis tool for marketing campaigns. With the central dashboard we delivered, the marketing team was able to analyze existing campaigns and design outlines for new ones based on key success factors.

You can learn more about SAP BI solutions here, and you can also watch SAP BI Solutions videos on YouTube. Want to unlock this information on what drives your business forward? Contact us on info@clariba or leave a comment below, and discover how SAP BI Solutions can help you achieve it.

BI and Social Media - A Powerful Combination (Part 2: Facebook)

To continue with my Social Media series (read the previous blog here BI and Social Media – A Powerful Combination Part 1: Google Analytics), today I would like to talk about the biggest social network of them all: Facebook. In this blog post, I will explain different alternatives I have recently researched to extract and use information from Facebook to perform social media analytics with SAP BusinessObjects' report and dashboard tools. In terms of the amount of useful information we can extract to perform analytics, I personally think that Twitter can be as good as or even better than Facebook; however, it has around 400 million fewer users. Facebook still stands as the social network with the most users around the world (901 million at this moment), making it a mandatory reference in terms of social media analytics.

Before I start talking about technical details, the first thing you need to understand is that Facebook is heavily focused on user experience, entertainment applications and content sharing, among other things. As a result, user activity is more scattered and variable than the orderly, real-time stream that Twitter gives us, which is very useful for building trends and chronological analyses. So be sure of what you are looking for, stay focused on your key indicators, and make sure you are after something meaningful and measurable.

Facebook APIs relevant for analytics purposes

The APIs (Application Programming Interfaces) that Facebook provides are largely directed at the development of applications for social networking and user entertainment. However, there are several APIs that can provide relevant information to establish Key Indicators that can later be used to run reports. As Facebook's developer page1 states: "We feel the best API solutions will be holistic cross API solutions." Among the APIs that you will find most useful (labeled by Facebook as Marketing APIs), I can highlight the Graph API, the Pages API, the Ads API and the Insights API. In any case, I encourage you to take a look at Facebook's pages and guides for developers; it will be worth your time:

Marketing Developer Program

Marketing Developer Resources (with mentions of the APIs above)

Facebook Marketing Solutions: http://www.facebook.com/marketing

Third-party applications for extracting data from Facebook

I found only a few third-party applications for extracting data from the Facebook APIs that were complete enough to guarantee reliable access to the data. Below are some alternatives designed for this requirement:

GA Data Grabber: This application has a module for the Facebook APIs, which costs 500 USD a year. As in the case of Google Analytics, it has key benefits such as ease of use and flexibility in building queries. It may also be integrated with some SAP BusinessObjects tools such as WebIntelligence, Data Integrator or Xcelsius dashboards through LiveOffice.2

Custom Application Development: This is the most popular option, as I already mentioned in my previous post about Google Analytics. The Facebook APIs can be accessed from common programming languages, allowing you to record the results of the queries in text files that can be loaded into a database or incorporated directly into various SAP BusinessObjects tools.

Implementation of a Web Spider: If the information requirements are more focused on the user's interactions with your client's Facebook webpage or any of its related Facebook applications, this method may provide information complementary to what is available in the APIs. The information obtained by the web spider can be stored in files or a database for further integration with SAP BusinessObjects tools. Typically, web spiders are developed in a common programming language, although in some cases you can buy an application developed by a third party, as in the case of Mozenda.3

Final Thought

As I mentioned in my previous post, new applications and trends appear at a hectic pace in the social media space and many changes are expected, so it is only a matter of time until we have more and better options available. I encourage you to be curious about social media analytics and its most popular networks, because right now they are a growing gold mine of information.

If you have any questions or anything to add to help improve this post, please feel free to leave your comments.

B-tree vs Bitmap indexes: Consequences of Indexing - Indexing Strategy for your Oracle Data Warehouse Part 2

In my previous blog post B-tree vs Bitmap indexes - Indexing Strategy for your Oracle Data Warehouse I answered two questions related to indexing: which kind of indexes can we use, and on which tables/fields should we use them? As I promised at the end of that post, now it's time to answer the third question: what are the consequences of indexing in terms of time (query time, index build time) and storage?

Consequences in terms of time and storage

To tackle this topic I will use a test database with a very simplified star schema: 1 fact table holding General Ledger account balances and 4 dimensions (date, account, currency and bank branch).

To give an idea of the table sizes, Fact_General_Ledger has 4.5 million rows, Dim_Date 14,000, Dim_Account 3,000, and Dim_Branch and Dim_Currency fewer than 200 each.

Let's assume here that users can query the data with filters on the date, branch code, currency code, account code and the 3 levels of the balance sheet hierarchy (DIM_ACCOUNT.LVLx_BS). We assume that descriptions are not used in filters, only in results.

Here is the query we will use as a reference:

    select
      d.date_date,
      a.account_code,
      b.branch_code,
      c.currency_code,
      f.balance_num
    from fact_general_ledger f
    join dim_account a on f.account_key = a.account_key
    join dim_date d on f.date_key = d.date_key
    join dim_branch b on f.branch_key = b.branch_key
    join dim_currency c on f.currency_key = c.currency_key
    where
      a.lvl3_bs = 'Deposits with banks' and
      d.date_date = to_date('16/01/2012', 'DD/MM/YYYY') and
      b.branch_code = 1 and
      c.currency_code = 'QAR' -- I live in Qatar ;-)

So, what are the results in terms of time and storage?

Some of the conclusions we can draw from this table are:

Using indexes pays off: queries are much faster (about 100 times), whatever the chosen index type is.

As for query time, the index type doesn't seem to really matter for tables that are not that big. This would probably change for a fact table with 10 billion rows. Nevertheless, there seems to be an advantage for bitmap indexes, and especially for bitmap join indexes (see the explain plan cost column).

Storage is clearly in favor of bitmap and bitmap join indexes.

Index build time is clearly in favor of b-tree. I haven't tested index update time, but theory says it is much faster for b-tree indexes as well.
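
If you want to reproduce this kind of measurement on your own schema, a minimal sketch could look like the following. It assumes the test tables described above and an illustrative filter value; `EXPLAIN PLAN`, `DBMS_XPLAN.DISPLAY` and the `USER_SEGMENTS` view are standard Oracle features, but the figures you get will depend on your data and database version.

```sql
-- Ask the optimizer for the plan (and estimated cost) of the reference query
EXPLAIN PLAN FOR
SELECT d.date_date, a.account_code, b.branch_code, c.currency_code, f.balance_num
FROM fact_general_ledger f
JOIN dim_account a ON f.account_key = a.account_key
JOIN dim_date d ON f.date_key = d.date_key
JOIN dim_branch b ON f.branch_key = b.branch_key
JOIN dim_currency c ON f.currency_key = c.currency_key
WHERE a.lvl3_bs = 'Deposits with banks'   -- illustrative value
  AND d.date_date = TO_DATE('16/01/2012', 'DD/MM/YYYY')
  AND b.branch_code = 1
  AND c.currency_code = 'QAR';

-- Display the plan, including the Cost column
SELECT * FROM TABLE(DBMS_XPLAN.DISPLAY);

-- Storage used by each index in the current schema, in MB
SELECT segment_name, ROUND(bytes / 1024 / 1024, 1) AS size_mb
FROM user_segments
WHERE segment_type = 'INDEX'
ORDER BY bytes DESC;
```

Running the plan once per index type (no index, b-tree, bitmap, bitmap join) gives comparable cost figures, and the last query gives the storage side of the comparison.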

OK, I'm convinced I should use indexes. How do I create and maintain one?

The syntax for creating b-tree and bitmap indexes is similar:

    Create Bitmap Index Index_Name ON Table_Name(FieldName)

For b-tree indexes, simply remove the word "Bitmap" from the statement above.
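
Applied to the test schema, the two variants might look like this. The index names are made up for illustration, and the column choices are assumptions: a low-cardinality balance sheet level for the bitmap index, and a near-unique account code for the b-tree.

```sql
-- Bitmap index on a low-cardinality dimension attribute
CREATE BITMAP INDEX dim_account_lvl3_bix ON dim_account (lvl3_bs);

-- B-tree index (the default type) on a near-unique attribute
CREATE INDEX dim_account_code_ix ON dim_account (account_code);
```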

The syntax for bitmap join indexes is longer but still easy to understand:

    create bitmap index ACCOUNT_CODE_BJ
    on fact_general_ledger(dim_account.account_code)
    from fact_general_ledger, dim_account
    where fact_general_ledger.account_key = dim_account.account_key
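
One prerequisite to keep in mind: Oracle expects the dimension-side join column of a bitmap join index to be covered by a primary key or unique constraint, and rejects the index creation otherwise. A minimal sketch, assuming no such constraint exists yet on the test dimension (the constraint name is hypothetical):

```sql
-- Declare the dimension key as unique before building the bitmap join index
-- (dim_account_pk is a made-up name)
ALTER TABLE dim_account
  ADD CONSTRAINT dim_account_pk PRIMARY KEY (account_key);
```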

Note that during your ETL, it is better to drop/disable your bitmap and bitmap join indexes and re-create/rebuild them afterwards, rather than updating them. It is supposed to be faster (however, I haven't run any tests).

The difference between drop/re-create and disable/rebuild is that when you disable an index, its definition is kept. So you need a single line to rebuild it rather than many lines for the full creation. However, index build times will be similar.

To drop an index: "drop index INDEX_NAME"

To disable an index: "alter index INDEX_NAME unusable"

To rebuild an index: "alter index INDEX_NAME rebuild"
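
Put together, the disable/rebuild variant around an ETL load could be sketched as follows. This is an outline under assumptions rather than a tested procedure: it reuses the ACCOUNT_CODE_BJ index from above, and the load step itself is left as a placeholder.

```sql
-- 1. Before the load: mark the index unusable (its definition is kept)
ALTER INDEX account_code_bj UNUSABLE;

-- 2. Let DML ignore unusable indexes (TRUE is already the default since Oracle 10g)
ALTER SESSION SET skip_unusable_indexes = TRUE;

-- 3. ... run the ETL load into fact_general_ledger here ...

-- 4. After the load: rebuild the index in a single statement
ALTER INDEX account_code_bj REBUILD;

-- 5. Check that no index on the fact table was left UNUSABLE
SELECT index_name, index_type, status
FROM user_indexes
WHERE table_name = 'FACT_GENERAL_LEDGER';
```

The final check is cheap insurance: an index left in UNUSABLE status is silently skipped by the optimizer, so queries would fall back to full table reads.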

Conclusion

The conclusion is clear: USE INDEXES! When properly used, they can really boost query response times. Think about using them in your ETL as well: making lookups can be much faster with indexes.

If you want to go further, I can only recommend reading the Oracle Data Warehousing Guide. To get it, just search for it on the Internet (and don't forget to specify your database version: 10.2, 11.1, 11.2, etc.). It is quite an interesting and complete document.

B-tree vs Bitmap indexes - Indexing Strategy for your Oracle Data Warehouse Part 1

Some time ago we saw how to create and maintain Oracle materialized views in order to improve query performance. But while materialized views are a valuable part of our toolbox, they definitely shouldn't be our first attempt at improving query performance. In this post we're going to talk about something you've already heard about and used, but we will take it to the next level: indexes.

Why use indexes? Because without them you have to perform a full read of every table. Just think of a phone book: it is indexed by name, so if I ask you to find all the phone numbers of people whose name is Larrouturou, you can do it in less than a minute. However, if I ask you to find all the people whose phone number starts with 66903, you will have no choice but to read the entire phone book. I hope you have nothing else planned for the next two months or so.

The same goes for database tables: if you look for something in a non-indexed fact table with millions of rows, the corresponding query will take a long time (and the typical end user doesn't want to sit for 5 minutes in front of their computer waiting for a report). With indexes, you could have found your result in less than 5 (or 1, or 0.1) seconds.

I will answer the following three questions: Which kind of indexes can we use? On which tables/fields shall we use them? What are the consequences in terms of time (query time, index build time) and storage?

Which kind of indexes can we use?

Oracle has many index types available (IOT, cluster, etc.), but I will only talk about the three main ones used in data warehouses.

B-tree indexes

B-tree indexes are mostly used on unique or near-unique columns. They keep a good performance during update/insert/delete operations, and therefore are well adapted to operational environments using third normal form schemas. But they are less frequent in data warehouses, where columns often have a low cardinality. Note that B-tree is the default index type – if you have created an index without specifying anything, then it’s a B-tree index.

Bitmap indexes

Bitmap indexes are best used on low-cardinality columns, where they can offer significant savings in terms of space as well as very good query performance. They are most effective on queries that contain multiple conditions in the WHERE clause.

Note that bitmap indexes are particularly slow to update.

Bitmap join indexes

A bitmap join index is a bitmap index for the join between tables (2 or more). It stores the result of the joins, and can therefore offer great performance on pre-defined joins. It is especially well suited to star schema environments.

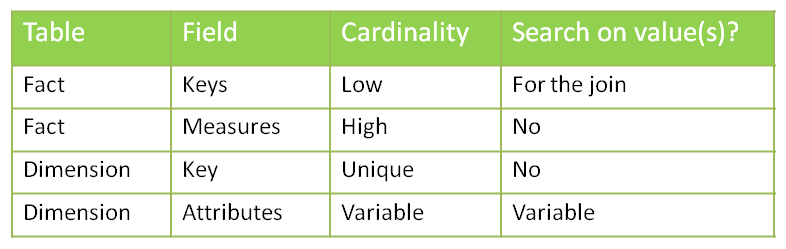

On which tables/fields shall we use which indexes?

Can we put indexes everywhere? No. Indexes come with costs (creation time, update time, storage) and should be created only when necessary.

Remember also that the goal is to avoid full table reads: if the table is small, the Oracle optimizer will decide to read the whole table anyway. So there is no need to create indexes on small tables. I can already hear you asking: "What is a small table?" A million-row table definitely is not small. A 50-row table definitely is small. A 4,532-row table? I'm not sure. Let's run some tests and find out.
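
A quick way to see which tables are candidates at all is to look at their row counts in the data dictionary. The sketch below assumes the table names of the test schema used in Part 2 of this series; note that `num_rows` in `USER_TABLES` reflects the last statistics gathering, not a live count.

```sql
-- Row counts as recorded by the last statistics gathering
SELECT table_name, num_rows
FROM user_tables
WHERE table_name IN ('FACT_GENERAL_LEDGER', 'DIM_DATE', 'DIM_ACCOUNT',
                     'DIM_BRANCH', 'DIM_CURRENCY')
ORDER BY num_rows DESC;
```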

Before deciding where to use indexes, let's analyze our typical star schema with one fact table and several dimensions.

Let's start by looking at the cardinality column. We have one case of uniqueness: the primary keys of the dimension tables. In that case, you may want to use a b-tree index to enforce uniqueness. However, if you consider that the ETL preparing the dimension tables has already made sure the dimension keys are unique, you may skip this index (it's all about your ETL and how much you trust it).
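
If you do want the database to enforce uniqueness, a unique b-tree index is one way to do it. A minimal sketch, assuming a date dimension keyed on `date_key` (the index name is made up):

```sql
-- Unique b-tree index: any insert of a duplicate dimension key is rejected
CREATE UNIQUE INDEX dim_date_key_ux ON dim_date (date_key);
```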

Then we have a case of high cardinality: the measures in the fact table. One of the main questions to ask when deciding whether or not to apply an index is: "Is anyone going to search for a specific value in this column?" In the example I've developed, I assume that no one is interested in knowing which account has a value of 43453.12. So there is no need for an index here.

What about the dimension attributes? The answer is "it depends". Are users going to search on column X? Then you want an index. You will choose the type based on cardinality: bitmap index for low cardinality, b-tree for high cardinality.
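
To judge the cardinality of a given attribute, you can simply compare its number of distinct values with the number of rows. The column and table below are assumptions borrowed from the test schema of Part 2:

```sql
-- Few distinct values relative to total rows suggests a bitmap index;
-- distinct values close to total rows suggests a b-tree index
SELECT COUNT(DISTINCT lvl3_bs) AS distinct_values,
       COUNT(*)                AS total_rows
FROM dim_account;
```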

As for the dimension keys in the fact table, is anyone going to search on them? Not directly (no filters on dimension keys!), but indirectly, yes. Every query that joins the fact table with one or more dimension tables looks for specific dimension keys in the fact table. We have two options to handle this: putting a bitmap index on each key column, or using bitmap join indexes.
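
The two options could be sketched as follows. Table and column names are assumptions taken from the test schema of Part 2, and the index names are invented for the example.

```sql
-- Option 1: one bitmap index per dimension key column of the fact table
CREATE BITMAP INDEX fgl_date_key_bix     ON fact_general_ledger (date_key);
CREATE BITMAP INDEX fgl_account_key_bix  ON fact_general_ledger (account_key);
CREATE BITMAP INDEX fgl_branch_key_bix   ON fact_general_ledger (branch_key);
CREATE BITMAP INDEX fgl_currency_key_bix ON fact_general_ledger (currency_key);

-- Option 2: a bitmap join index on the dimension attribute users filter on
-- (requires a primary key or unique constraint on dim_branch.branch_key)
CREATE BITMAP INDEX fgl_branch_code_bj
  ON fact_general_ledger (dim_branch.branch_code)
  FROM fact_general_ledger, dim_branch
  WHERE fact_general_ledger.branch_key = dim_branch.branch_key;
```

Option 1 helps any join on those keys, while option 2 is tied to one specific join and filter column, which is why it suits predictable star-schema queries.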

More questions...

Are indexes effective? And what about the storage they need? And the time needed to build/refresh them?

We will talk about that next week in the second part of my post.