The SAP BusinessObjects (BO) BI 4 Platform came with many improvements that have made the system more complex. As BI 4 administrators, we need to know how this application works so that we can apply the appropriate optimizations to the platform when necessary. In this blog post, we will cover the most important concepts to consider as a first step towards increasing the performance of the BI platform. It is very important for a good designer to understand the conceptual tiers of the BI platform: the application tier, the intelligence tier and the processing tier. We will look at each of them and nail down some optimization tips.

Running SAP BusinessObjects Explorer on Top of SAP BW

This blog describes the only successfully tested way to run SAP Explorer on top of SAP NetWeaver Business Warehouse (SAP BW) InfoProviders. This is not a straightforward configuration, and it requires knowledge of the Information Design Tool, the Data Federator Administration Tool and the SAP Logon application for SAP BW.

Handling Formula Errors in Web Intelligence

Do You Know What Drives Your Business?

Knowing your business and making smarter decisions to keep up with the everyday risks involved in running it is essential to survive in today's global economy.

Making effective decisions requires information. This information must be accurate, up to date and at the right level of detail for you to move forward at optimal speed. Key business analytics is what lets you draw insight from the data you collect across the different parts of your business. When you understand exactly what is driving your business, where new opportunities are coming from and where mistakes were made, you can be proactive in maximizing existing revenue and revealing areas for expansion. The best decisions are made when you have more visibility into the vital knowledge that comes from your own company.

SAP Business Intelligence (BI) solutions provide a window into your company. A dashboard, for example, is a single, reliable, real-time overview of your company. It gives you a quick view in attractive visual formats that are easy to understand. It also offers "what-if" scenarios that let you measure the impact of a particular change on the business. This can also be made available on mobile devices, so you can make informed decisions on the move. When you have information you can trust, you can act quickly and stay ahead of the game.

With years of expertise in BI, Clariba has helped several companies draw insight from their data. For example, Vodafone Turkey's Marketing department sought our help to provide the Customer Value Management Team with a dynamic and user-friendly visualization and analysis tool for marketing campaigns. With the central dashboard we delivered, the marketing team was able to analyze existing campaigns and design outlines for new ones based on key success factors.

You can learn more about SAP BI solutions here, and you can also watch SAP BI Solutions videos on YouTube. Want to unlock this information on what drives your business forward? Contact us on info@clariba or leave a comment below, and discover how SAP BI Solutions can help you achieve it.

BI and Social Media - A Powerful Combination (Part 2: Facebook)

To continue with my Social Media series (read the previous post here: BI and Social Media – A Powerful Combination Part 1: Google Analytics), today I would like to talk about the biggest social network of them all: Facebook. In this blog post, I will explain different alternatives I have recently researched to extract and use information from Facebook to perform social media analytics with SAP BusinessObjects' reporting and dashboard tools. In terms of the amount of useful information we can extract for analytics, I personally think that Twitter can be as good as or even better than Facebook; however, it has around 400 million fewer users. Facebook still stands as the social network with the most users around the world (901 million at this moment), making it a mandatory reference in terms of social media analytics.

Before talking about technical details, the first thing you need to understand is that Facebook is strongly focused on user experience, entertainment applications and content sharing, among other things. User activity is therefore more scattered and variable than the orderly, real-time stream that Twitter provides, which is very useful when building trends and chronological analyses. So be sure of what you are looking for, stay focused on your key indicators and make sure you look for something meaningful and measurable.

Facebook APIs Relevant for Analytical Purposes

The APIs (Application Programming Interfaces) that Facebook provides are largely directed at developing applications for social networking and user entertainment. However, several APIs can provide relevant information to establish Key Indicators that can later be used to run reports. As Facebook's developer page[1] states: "We feel the best API solutions will be holistic cross API solutions." Among the APIs you will find most useful (labeled by Facebook as Marketing APIs), I would highlight the Graph API, the Pages API, the Ads API and the Insights API. In any case, I encourage you to take a look at Facebook's pages and guides for developers; it will be worth your time:

Marketing Developer Program

Marketing Developer Resources (with mentions of the APIs above)

Facebook Marketing Solutions: http://www.facebook.com/marketing

Third-Party Applications to Extract Facebook Data

I only found a few third-party applications for extracting data from the Facebook APIs that were complete enough to guarantee reliable access to the data. Below are some alternatives designed for this requirement:

GA Data Grabber: This application has a module for the Facebook APIs, which costs USD 500 a year. As with Google Analytics, its key benefits are ease of use and flexibility in building queries. It can also be integrated with some SAP BusinessObjects tools such as Web Intelligence, Data Integrator or Xcelsius dashboards through Live Office.[2]

Custom Application Development: This is the most popular option, as I already mentioned in my previous post about Google Analytics. The Facebook APIs can be accessed from common programming languages, allowing you to record the results of your queries in text files that can be loaded into a database or incorporated directly into various SAP BusinessObjects tools.

Implementation of a Web Spider: If the information requirements are more focused on users' interactions with your client's Facebook page or any of its related Facebook applications, this method may provide information that complements what is available through the APIs. The information obtained by the web spider can be stored in files or in a database for further integration with SAP BusinessObjects tools. Web spiders are typically developed in a common programming language, although in some cases you can buy an application developed by a third party, as in the case of Mozenda.[3]
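To make the custom-development option more concrete, here is a minimal Python sketch of the "write query results to a text file" step: it flattens a JSON API response into CSV rows that a database load or an SAP BusinessObjects tool could consume. The payload shape (a `data` list of metrics, each with `name`, `period` and a `values` list of `value`/`end_time` pairs) is an assumption modelled on a Graph API Insights response, the sample metric is illustrative, and the HTTP call and authentication are deliberately left out.

```python
import csv
import io
import json

# Illustrative payload; the shape is an assumption modelled on an Insights response.
SAMPLE_RESPONSE = """
{"data": [
  {"name": "page_fans", "period": "lifetime",
   "values": [{"value": 1200, "end_time": "2012-06-01T07:00:00+0000"},
              {"value": 1215, "end_time": "2012-06-02T07:00:00+0000"}]}
]}
"""


def insights_to_csv(payload: str) -> str:
    """Flatten metric/value pairs into CSV text, one row per data point."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["metric", "period", "end_time", "value"])
    for metric in json.loads(payload).get("data", []):
        for point in metric.get("values", []):
            writer.writerow([metric["name"], metric.get("period", ""),
                             point.get("end_time", ""), point.get("value", "")])
    return out.getvalue()


if __name__ == "__main__":
    # In practice this text would be written to a file picked up by the ETL.
    print(insights_to_csv(SAMPLE_RESPONSE), end="")
```

Keeping the parsing separate from the (omitted) HTTP call makes it easy to test the flattening logic offline and to swap in whichever client library you use.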

Final Thought

As I mentioned in my previous post, new applications and trends appear at a hectic pace in the social media area and many changes are expected, so it is only a matter of time until we have more and better options available. I encourage you to be curious about social media analytics and the most popular social networks, because right now they are a growing gold mine of information.

If you have any questions or anything to add to help improve this post, please feel free to leave your comments.

B-tree vs Bitmap Indexes: Consequences of Indexing - Indexing Strategy for your Oracle Data Warehouse Part 2

In my previous blog post, B-tree vs Bitmap indexes - Indexing Strategy for your Oracle Data Warehouse, I answered two questions related to indexing: which kinds of indexes we can use, and on which tables/fields we should use them. As I promised at the end of that post, it's now time to answer the third question: what are the consequences of indexing in terms of time (query time, index build time) and storage?

Consequences in Terms of Time and Storage

To address this topic I will use a test database with a very simplified star schema: 1 fact table of General Ledger account balances and 4 dimensions (date, account, currency and bank branch).

To give an idea of the table sizes, Fact_General_Ledger has 4.5 million rows, Dim_Date 14,000, Dim_Account 3,000, and Dim_Branch and Dim_Currency fewer than 200 each.

Let's assume that users can query the data by filtering on date, branch code, currency code, account code and the 3 levels of the balance sheet hierarchy (DIM_ACCOUNT.LVLx_BS). We also assume that descriptions are not used in filters, only in results.

Here is the query we will use as a reference:

select
  d.date_date,
  a.account_code,
  b.branch_code,
  c.currency_code,
  f.balance_num
from fact_general_ledger f
join dim_account a on f.account_key = a.account_key
join dim_date d on f.date_key = d.date_key
join dim_branch b on f.branch_key = b.branch_key
join dim_currency c on f.currency_key = c.currency_key
where
  a.lvl3_bs = 'Deposits with banks' and
  d.date_date = to_date('16/01/2012', 'DD/MM/YYYY') and
  b.branch_code = 1 and
  c.currency_code = 'QAR' -- I live in Qatar ;-)

So, what are the results in terms of time and storage?

Some of the conclusions we can draw from this table are:

Using indexes pays off: queries are really faster (about 100 times), whatever the chosen index type is.

As for query time, the index type does not really seem to matter for tables that are not that big. This would probably change for a fact table with 10 billion rows. Nevertheless, there seems to be an advantage for bitmap indexes, and especially for bitmap join indexes (see the cost column of the explain plan).

Storage clearly favors bitmap and bitmap join indexes.

Index build time clearly favors B-tree. I have not tested index update time, but theory says it is much faster for B-tree indexes too.
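If you want to see the "full scan vs index search" effect on your own machine without an Oracle instance, the following Python sketch uses the standard-library `sqlite3` module on a synthetic table. This is a swapped-in database: SQLite only offers B-tree indexes (no bitmap or bitmap join indexes), so it illustrates only the general point that an index changes the access path, not the Oracle-specific comparison above. The table mirrors `fact_general_ledger`, but its rows and the index name `fact_account_idx` are made up.

```python
import sqlite3
from typing import Tuple


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> str:
    """Return the 'detail' column of EXPLAIN QUERY PLAN, joined into one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(str(row[-1]) for row in rows)


def compare_plans() -> Tuple[str, str]:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE fact_general_ledger (account_key INTEGER, balance_num REAL)")
    # Synthetic rows: 20,000 balances spread over 3,000 hypothetical account keys.
    conn.executemany(
        "INSERT INTO fact_general_ledger VALUES (?, ?)",
        ((i % 3000, i * 0.5) for i in range(20000)))
    sql = "SELECT balance_num FROM fact_general_ledger WHERE account_key = ?"
    before = query_plan(conn, sql, (42,))  # no index yet: full table scan
    conn.execute(
        "CREATE INDEX fact_account_idx ON fact_general_ledger (account_key)")
    after = query_plan(conn, sql, (42,))   # the optimizer can now search the index
    conn.close()
    return before, after


if __name__ == "__main__":
    before, after = compare_plans()
    print("without index:", before)
    print("with index:   ", after)
```

The exact wording of the plan text varies between SQLite versions, but the first plan reports a SCAN of the table and the second a SEARCH using the index.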

OK, I'm convinced I should use indexes. How do I create and maintain one?

The syntax for creating B-tree and bitmap indexes is similar:

CREATE BITMAP INDEX index_name ON table_name (field_name)

For B-tree indexes, simply remove the word "BITMAP" from the statement above.

The syntax for bitmap join indexes is longer but still easy to understand:

CREATE BITMAP INDEX ACCOUNT_CODE_BJ
ON fact_general_ledger (dim_account.account_code)
FROM fact_general_ledger, dim_account
WHERE fact_general_ledger.account_key = dim_account.account_key

Note that during your ETL, it is better to drop or disable your bitmap and bitmap join indexes and re-create or rebuild them afterwards, rather than having them updated during the load. This is supposed to be faster (although I have not run any tests).

The difference between drop/re-create and disable/rebuild is that when you disable an index, its definition is kept. So you need a single line to rebuild it instead of many lines for the full creation. However, index build times will be similar.

To drop an index: "drop index INDEX_NAME"

To disable an index: "alter index INDEX_NAME unusable"

To rebuild an index: "alter index INDEX_NAME rebuild"
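The disable/rebuild pattern above can be wrapped around a load step. The Python sketch below only generates the statements (it does not connect to Oracle); the function name is hypothetical and `ACCOUNT_CODE_BJ` is reused from the example above. Before relying on this in production, check how your loads behave while indexes are unusable (for example, Oracle's `SKIP_UNUSABLE_INDEXES` setting).

```python
from typing import Iterable, List, Tuple


def wrap_load_with_index_maintenance(
        index_names: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Return (before_load, after_load) DDL for the disable/rebuild pattern.

    Marking an index UNUSABLE keeps its definition, so a one-line
    REBUILD is enough to restore it once the load has finished.
    """
    names = list(index_names)
    before = [f"ALTER INDEX {name} UNUSABLE" for name in names]
    after = [f"ALTER INDEX {name} REBUILD" for name in names]
    return before, after


if __name__ == "__main__":
    before, after = wrap_load_with_index_maintenance(["ACCOUNT_CODE_BJ"])
    # Placeholder comment marks where the actual fact table load would run.
    for stmt in before + ["-- ... load fact_general_ledger here ..."] + after:
        print(stmt if stmt.startswith("--") else stmt + ";")
```

Generating the statement pairs from a single list of index names keeps the "disable" and "rebuild" halves from drifting apart as indexes are added or removed.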

Conclusion

The conclusion is clear: USE INDEXES! When properly used, they can really boost query response times. Think about using them in your ETL as well: making lookups can be much faster with indexes.

If you want to go further, I can only recommend reading the Oracle Data Warehousing Guide. To get it, just search the Internet (and don't forget to specify your database version: 10.2, 11.1, 11.2, etc.). It is a very interesting and complete document.

B-tree vs Bitmap Indexes - Indexing Strategy for your Oracle Data Warehouse Part 1

Some time ago we saw how to create and maintain Oracle materialized views in order to improve query performance. But while materialized views are a valuable part of our toolbox, they definitely shouldn't be our first attempt at improving query performance. In this post we're going to talk about something you've already heard about and used, but we will take it to the next level: indexes.

Why use indexes? Because without them you have to perform a full read of every table. Just think of a phone book: it is indexed by name, so if I ask you to find all the phone numbers of people called Larrouturou, you can do it in less than a minute. However, if I ask you to find all the people whose phone number starts with 66903, you will have no choice but to read the whole phone book. I hope you have nothing else planned for the next two months or so.

The same goes for database tables: if you look for something in a non-indexed multi-million-row fact table, the query will take a long time (and the typical end user does not want to sit in front of their computer for 5 minutes waiting for a report). With indexes, the result could have been found in less than 5 (or 1, or 0.1) seconds.
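The phone book analogy can be sketched in a few lines of Python. Here a list of (surname, number) pairs kept sorted by surname plays the role of an index on the name: a binary search (`bisect`) jumps straight to the matching entries, while a search on the number prefix has to read every entry. This is only an in-memory illustration of the idea, not how an Oracle B-tree is stored, and the entries are made up.

```python
import bisect
from typing import List, Tuple

# Hypothetical phone book, sorted by surname (the "indexed" column).
PHONE_BOOK: List[Tuple[str, str]] = sorted([
    ("Dupont", "66901234"),
    ("Larrouturou", "66903111"),
    ("Larrouturou", "55512345"),
    ("Martin", "66903999"),
    ("Smith", "44400000"),
])


def find_by_name(book: List[Tuple[str, str]], name: str) -> List[str]:
    """Indexed lookup: binary search to the first match, then read neighbours."""
    i = bisect.bisect_left(book, (name, ""))
    numbers = []
    while i < len(book) and book[i][0] == name:
        numbers.append(book[i][1])
        i += 1
    return numbers


def find_by_number_prefix(book: List[Tuple[str, str]], prefix: str) -> List[str]:
    """No index on numbers: every entry must be read (a full scan)."""
    return [surname for surname, number in book if number.startswith(prefix)]


if __name__ == "__main__":
    print(find_by_name(PHONE_BOOK, "Larrouturou"))
    print(find_by_number_prefix(PHONE_BOOK, "66903"))
```

The name lookup touches O(log n) entries plus the matches; the prefix search always touches all n, which is the difference between an indexed query and a full table read.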

I will answer the following three questions: Which kinds of indexes can we use? On which tables/fields will we use them? What are the consequences in terms of time (query time, index build time) and storage?

Which Kinds of Indexes Can We Use?

Oracle has many types of indexes available (IOT, cluster, etc.), but I will only talk about the three main ones used in data warehouses.

B-tree Indexes

B-tree indexes are mostly used on unique or near-unique columns. They keep a good performance during update/insert/delete operations, and therefore are well adapted to operational environments using third normal form schemas. But they are less frequent in data warehouses, where columns often have a low cardinality. Note that B-tree is the default index type – if you have created an index without specifying anything, then it’s a B-tree index.

Bitmap Indexes

Bitmap indexes are best used on low-cardinality columns, where they can offer significant space savings as well as very good query performance. They are most effective for queries that contain several conditions in the WHERE clause.

Note that bitmap indexes are particularly slow to update.
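To see why bitmap indexes shine on low-cardinality columns and multi-condition WHERE clauses, here is a toy Python model: one bitmap (a Python integer) per distinct value, with bit i set when row i holds that value. Two predicates then combine with a single bitwise AND. The column values are hypothetical, and Oracle's real on-disk format is compressed and far more sophisticated.

```python
from typing import Dict, List, Sequence


def build_bitmap_index(column: Sequence[str]) -> Dict[str, int]:
    """One bitmap per distinct value; bit i is set when row i holds that value."""
    index: Dict[str, int] = {}
    for row_id, value in enumerate(column):
        index[value] = index.get(value, 0) | (1 << row_id)
    return index


def rows_from_bitmap(bitmap: int) -> List[int]:
    """Decode a bitmap back into the list of matching row ids."""
    return [i for i in range(bitmap.bit_length()) if (bitmap >> i) & 1]


# Hypothetical fact rows with two low-cardinality columns.
currency = ["QAR", "USD", "QAR", "EUR", "QAR", "USD"]
branch = ["1", "1", "2", "1", "1", "2"]

currency_idx = build_bitmap_index(currency)
branch_idx = build_bitmap_index(branch)

# WHERE currency = 'QAR' AND branch = '1' becomes one bitwise AND.
matches = currency_idx["QAR"] & branch_idx["1"]

if __name__ == "__main__":
    print(rows_from_bitmap(matches))
```

The model also hints at the update cost: changing one row's currency means rewriting the bitmaps of both its old and its new value.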

Bitmap Join Indexes

A bitmap join index is a bitmap index on the join of two or more tables. It stores the result of the join, and can therefore deliver great performance on predefined joins. It is especially well suited to star schema environments.
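A toy Python model of the same idea: the join between the fact table and `dim_account` is resolved once, at index build time, producing one bitmap of fact rows per `account_code`. A later filter on the dimension attribute then reads the bitmap directly, without touching the dimension table. The table contents are hypothetical, and this only mimics the concept, not Oracle's implementation.

```python
from typing import Dict, List, Tuple

# Hypothetical dimension: account_key -> account_code.
dim_account: Dict[int, str] = {10: "1001", 11: "1002", 12: "2001"}

# Hypothetical fact rows: (account_key, balance_num).
fact_general_ledger: List[Tuple[int, float]] = [
    (10, 500.0), (12, 75.5), (10, -20.0), (11, 9.9), (12, 1.0)]


def build_bitmap_join_index(fact: List[Tuple[int, float]],
                            dim: Dict[int, str]) -> Dict[str, int]:
    """For each dimension attribute value, set the bits of the fact rows joining to it."""
    index: Dict[str, int] = {}
    for row_id, (account_key, _balance) in enumerate(fact):
        code = dim[account_key]  # the join, evaluated once at build time
        index[code] = index.get(code, 0) | (1 << row_id)
    return index


def balances_for_code(fact: List[Tuple[int, float]],
                      index: Dict[str, int], code: str) -> List[float]:
    """Answer a filter on dim_account.account_code without reading dim_account."""
    bitmap = index.get(code, 0)
    return [fact[i][1] for i in range(len(fact)) if (bitmap >> i) & 1]


bj_index = build_bitmap_join_index(fact_general_ledger, dim_account)

if __name__ == "__main__":
    print(balances_for_code(fact_general_ledger, bj_index, "2001"))
```

The trade-off shows up here too: any change to the fact rows or to the dimension mapping means recomputing the affected bitmaps.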

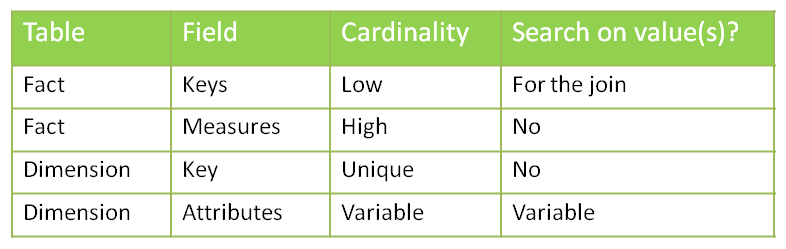

On Which Tables/Fields Will We Use Which Indexes?

Can we put indexes everywhere? No. Indexes come with costs (creation time, update time, storage) and should be created only when necessary.

Also remember that the goal is to avoid full table reads: if the table is small, the Oracle optimizer will decide to read the whole table anyway. So there is no need to create indexes on small tables. I can already hear you asking: "What is a small table?" A one-million-row table is definitely not small. A 50-row table definitely is. A 4,532-row table? I'm not sure. Let's run some tests and find out.

Before deciding where to use indexes, let's analyze our typical star schema with a fact table and several dimensions.

Let's start by looking at the cardinality column. We have one case of uniqueness: the primary keys of the dimension tables. In that case, you may want to use a B-tree index to enforce uniqueness. However, if you consider that the ETL that prepares the dimension tables already makes sure that the dimension keys are unique, you can skip this index (it's all about your ETL and how much you trust it).

Then we have a case of high cardinality: the measures in the fact table. One of the main questions to ask when deciding whether or not to create an index is: "Is anyone going to search for a specific value in this column?" In the example I have built, I assume that nobody is interested in knowing which account has a value of 43453.12. So there is no need for an index here.

What about the dimension attributes? The answer is "it depends". Are users going to search on column X? Then you want an index. You will choose its type based on cardinality: a bitmap index for low cardinality, a B-tree index for high cardinality.

As for the dimension keys in the fact table, is anyone going to search on them? Not directly (no filters on dimension keys!), but indirectly, yes. Every query that joins a fact table with one or more dimension tables looks for specific dimension keys in the fact table. We have two options to handle this: put a bitmap index on each key column, or use bitmap join indexes.
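The rules of thumb above can be condensed into a small decision function. This Python sketch is only a summary of the reasoning in this post, not an Oracle feature: the role labels, the `trust_etl` flag and especially the 1% low-cardinality threshold are hypothetical choices you would tune for your own data.

```python
from dataclasses import dataclass


@dataclass
class Column:
    name: str
    role: str             # hypothetical labels: "dim_pk", "measure", "dim_attribute", "fact_fk"
    searched: bool        # will users filter on this column?
    distinct_values: int
    row_count: int


def suggest_index(col: Column, low_cardinality_ratio: float = 0.01,
                  trust_etl: bool = False) -> str:
    """Return 'btree', 'bitmap' or 'none' following the rules of thumb in the text."""
    if col.role == "dim_pk":
        # Enforce uniqueness with a B-tree unless the ETL already guarantees it.
        return "none" if trust_etl else "btree"
    if col.role == "fact_fk":
        # Searched indirectly by every star join: bitmap (or a bitmap join index).
        return "bitmap"
    if not col.searched:
        # e.g. measures nobody filters on.
        return "none"
    is_low = col.distinct_values <= low_cardinality_ratio * col.row_count
    return "bitmap" if is_low else "btree"


if __name__ == "__main__":
    for c in [Column("account_key", "dim_pk", True, 3000, 3000),
              Column("balance_num", "measure", False, 4_000_000, 4_500_000),
              Column("lvl1_bs", "dim_attribute", True, 5, 3000),
              Column("account_code", "dim_attribute", True, 3000, 3000),
              Column("account_key", "fact_fk", False, 3000, 4_500_000)]:
        print(c.role, c.name, "->", suggest_index(c))
```

Writing the rules down this way makes the assumptions explicit (for example, how much you trust your ETL) and easy to review with the team.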

More Questions...

Are the indexes effective? And what about the storage required? And the time needed to build/refresh the indexes?

We will talk about that next week in the second part of my post.

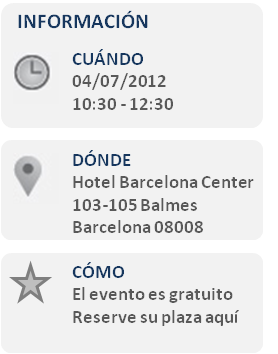

Attend the SAP Roadshow Innova Pyme 2012 with Clariba and com&Geinsa

Discover how SAP business analytics applications can help you confidently access the information behind your decisions and take action to improve your company's performance.

Attend our event on 4 July from 10:30 to 12:30 and discover what your company can achieve with SAP solutions.

At the SAP Roadshow Innova Pyme 2012 you will have the opportunity to talk with consultants from com&Geinsa and Clariba, SAP partners with years of experience in information management solutions. They will answer any questions you may have, or we can arrange a meeting to give you more personalized attention.

- Welcome coffee and pastries

- Introduction

- Presentation of SAP BI for Business One

- Demonstration of the finance and sales dashboard

- Questions and answers

- Thanks and farewell

We look forward to seeing you at our SAP Roadshow Innova Pyme 2012. The event is free; to register, please CLICK HERE.

Kind regards,

Marc Haberland, Managing Director, Clariba

_______________________________________________________________

About Clariba

Clariba delivers innovative and reliable business intelligence solutions, giving clients in more than 15 countries the insight they need to improve their business performance. Our consultants are certified experts in the planning, development and installation of SAP BusinessObjects. www.clariba.com

About com&Geinsa

For more than 30 years, com&Geinsa has been providing solutions to get the most out of your company's information systems, without losing sight of the evolution of technologies such as mobility and cloud. At com&Geinsa we are experts in making SAP a unique solution for your company. www.comgeinsa.com

What Keeps You Up at Night? Information Management Nightmares

Clariba recently carried out a survey about information management with participants at an event. The results were quite interesting, since most participants mentioned the same pain points, so we have decided to share them in this article. To carry out the survey, we used simple statements that people could identify with, divided into two areas: Business and Technical. We then asked participants to fill in a one-page questionnaire, checking all the pain points they were facing (they could check multiple pain points).

Business Pain Points

On the business side, 45% of respondents said that their boss constantly pushes them for more information. This is a very sensitive issue. We all know that companies cannot afford to make decisions that are not based on facts. So if you are responsible for gathering these facts, you will be under constant pressure. This would be simple to deal with if the information you need were easy to gather. However, when we see that 35% of participants complained that they spend too much time consolidating information and 30% could not get all the information they need, we understand why this becomes a big problem. Business intelligence platforms should not only provide you with all the information you need, they should also make it easily available. It can be a daunting task when you have to wait for IT to return queries for a business question you need to answer, or when you have to compare 3 different data sources to find the true value before a meeting that starts in an hour.

Another common source of dissatisfaction was data obsolescence. 40% said their BI systems only provide historical data and that they cannot see how the company is performing right now. This can be caused by not having a proper data warehouse in place, having one that is not refreshed as often as it should be, or having poorly designed ETL processes. Whatever the reason, it can lead to poor decision making. When businesses move at the speed of light (in a call center, for example), relying on old information can be misleading.

Another problem related to data obsolescence appeared when 40% said they like Microsoft Excel as a tool for manipulating information, but that they are not getting fresh data. If you have to prepare presentations or send emails that include Microsoft Excel data and you have to update them manually, not only will you spend a lot of time on it, you will also be prone to making mistakes. Business intelligence tools should let users optimize their time while reducing manual work and minimizing errors.

Technical Pain Points

On the technical side of our survey, the most common complaint was about the workload related to information management. 55% of participants stated that their department is completely overloaded. This is also related to another popular complaint: 45% of respondents say they have system users calling them all the time. If users do not have the autonomy to create their own reports and need IT for every query they have to run, the department becomes a bottleneck. That is why it is so important to give business users easy-to-use tools that give them the freedom to draw insight from corporate data by themselves, as this speeds up the process of sharing information.

Another frequently mentioned pain point on the technical side is that 40% of respondents do not get the support they need for their BI system. When a problem occurs on the BI platform, the impact on a company can be huge, since people end up making decisions without reliable data to guide them. Proper support is therefore vital for a company to function correctly, both for SAP licenses and for the BI solutions implemented.

Finally, 35% of respondents have no visibility into how their BI systems are used. When IT has no idea how the system is being used, they cannot optimize the server and licenses, cannot understand their users and cannot be proactive in forecasting when changes will be needed. They must be able to understand what happens in their BI applications and ensure that the BI environments work properly.

How to Solve These Problems?

Whether you use Microsoft Excel, a legacy BI system or an SAP BI solution, Clariba can help you solve the data management problems you face every day. Clariba's solutions, which are based on SAP BusinessObjects, the leading BI platform on the market today, can help business users and IT departments address their concerns.

With the full range of SAP BusinessObjects BI modules we are able to provide companies with the solutions they need. We are also capable of developing optimal ETL processes and Data Warehousing with our Data Management services to make sure you have high quality data analysis.

Furthermore, we can ensure your systems are performing to their maximum capacity. Our Support Center has been certified by SAP to provide support on SAP BusinessObjects licenses and also on BI systems and implementation. If what you are looking for is to monitor your BI system, we have recently developed PerformanceShield, a solution that ensures an effective management of your Business Intelligence (BI) system, which is critical to guarantee your organization is optimizing its investments on BI.

Not sure where to begin? The first step is a 360 BI Assessment, based on best practices and an in-depth understanding of your BI platform, tools, content and licenses. If your current BI system has any gaps or challenges, SAP BusinessObjects and Clariba will recommend steps for making it more secure, stable or efficient for all your users and audiences.

If you are experiencing any of the pain points mentioned, or any of the others shown in the charts, contact Clariba and discover how you can get the most out of your business intelligence investments.

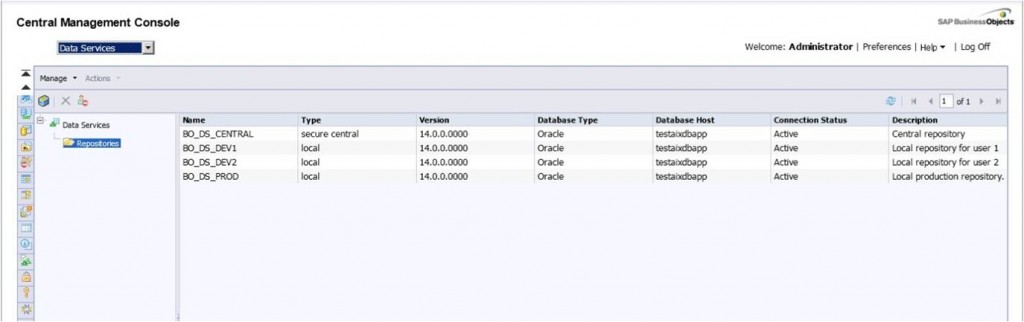

Working with Data Services 4.0 repositories

In my previous blog post, "Installing Data Services 4.0 in a distributed environment", I mentioned there was an important step to be carried out after installing Data Services in a distributed environment: configuring the repositories. As promised, I will now walk you through this process. Data Services 4.0 can now be managed using the BI Platform for security and administration. This puts all of the security for Data Services in one place, instead of fragmenting it across the various repositories, and it means that Data Services repositories are managed through the Central Management Console (CMC). With Data Services 4.0 you can set rights on individual repositories just as you would on any other object in the SAP BusinessObjects platform, which is what makes managing Data Services security through the CMC particularly interesting.

Now you can log into Data Services Designer or Management Console with your SAP BusinessObjects user ID instead of needing to enter database credentials. Once you log into Designer, you are presented with a simple list of Data Services repositories to choose from.

I advise anyone who is beginning to experiment with Data Services to use the repositories properly from the start! At first it might seem really tough, but it will prove very useful. SAP BusinessObjects Data Services solutions are built on 3 different types of metadata repositories: central, local and profile repositories. In this article I am going to show you how to configure and use the central and local repositories.

The local repositories can be used by individual ETL developers to store the metadata pertaining to their ETL code, while the central repository is used to "check in" individual work and maintain a single version of truth for the configuration items. This check-in action gives you a version history from which you can recover older versions if you need them.
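The local/central split can be pictured with a toy Python model: each check-in appends a new version of an object to the central history, so any earlier version can be recovered later. This is only a conceptual illustration, not the Data Services API; the class, its methods and the object name `DF_Load_GL` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CentralRepository:
    """Toy model: every check-in appends a new version of an object."""
    history: Dict[str, List[str]] = field(default_factory=dict)

    def check_in(self, obj_name: str, definition: str) -> int:
        versions = self.history.setdefault(obj_name, [])
        versions.append(definition)
        return len(versions)  # 1-based version number

    def get_version(self, obj_name: str, version: int = 0) -> str:
        """Return the given 1-based version, or the latest one when version is 0."""
        versions = self.history[obj_name]
        return versions[-1] if version == 0 else versions[version - 1]


# A developer's local repository, modelled as a plain dict of work in progress.
local_repo = {"DF_Load_GL": "v1: read source, load target"}
central = CentralRepository()
central.check_in("DF_Load_GL", local_repo["DF_Load_GL"])

local_repo["DF_Load_GL"] = "v2: add lookup on dim_account"
central.check_in("DF_Load_GL", local_repo["DF_Load_GL"])

if __name__ == "__main__":
    print(central.get_version("DF_Load_GL"))     # latest checked-in version
    print(central.get_version("DF_Load_GL", 1))  # older version recovered
```

The point of the model is the separation of concerns: developers iterate freely in their local copies, and only check-ins become part of the shared, versioned history.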

Now let's see how to configure the local and central repositories in order to finish the Data Services installation.

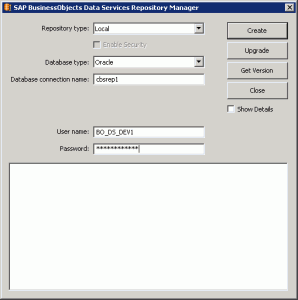

So, let's start with the local repository. First of all, go to the Start menu and start the Data Services Repository Manager tool.

Choose “Local” in the repository type combo box. Then, enter the information to configure the meta-data of the Local Repository.

For the central repository, choose "Central" as the repository type and then enter the information to configure the metadata of the central repository. Select the "Enable Security" check box.

After filling in the information, press Get Version to check whether there is a connection to the database. If the connection is established, press Create.

Once you have created the repositories, you have to add them to the Data Services Management Console. To do that, log in to the DS Management Console. You will see the screen below.

This error is telling you that you have to register the repositories. The next step is to click Administrator to register them.

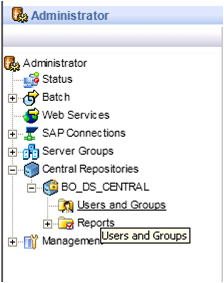

On the left pane, click on Management list item. Then click on Repositories and you will see that there are no repositories registered on it. In order to register them, click the Add button to register a repository in management console.

Finally, write the repository information and click Test to check the connection. If the connection is successful, click apply and then you will be able to see the following image which contains the repositories that you have created. DO NOT forget to register the central repository.

After that, the next logical step is to try to access Data Services using one of the local repositories. Once inside the Designer, activate the central repository that you have created. Data services will display an error telling you that you do not have enough privileges to activate it.

To be able to activate the central repository, you have to assign security. To do that, go to the Data Services Management Console. In the left pane, go to "Central Repository" and click "Users and Groups".

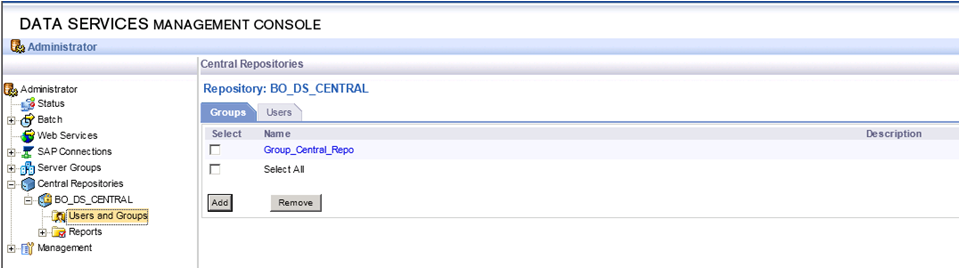

Once you are inside Users and Groups, click “add” and create a new group. When the group is created select it and click on the “users” tab which is on the right of the group tab (see the image below).

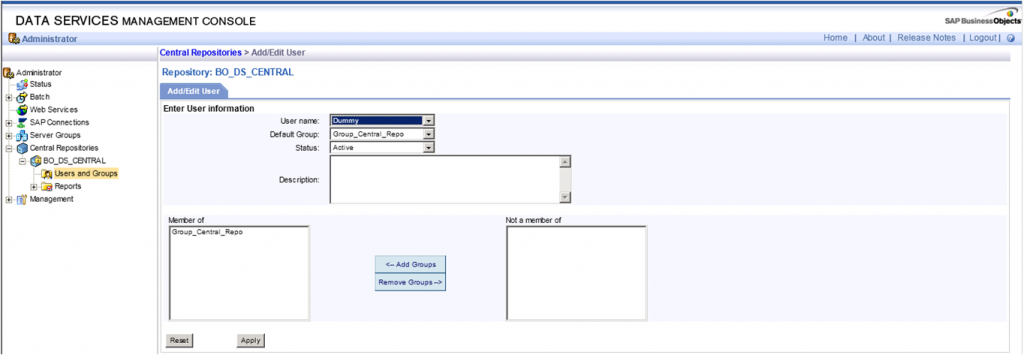

Now you can add the names of the users who can activate and use the central repository in the Designer tool. As you can see in the image below, the only user added in this example is "Administrator".

Now the last task is to configure the Job Server to finish the configuration.

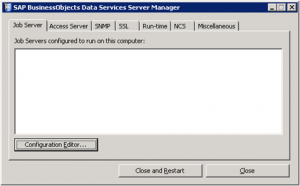

As always, go to Start Menu and start Server Manager Tool. Once you start the Server Manager the window below appears.

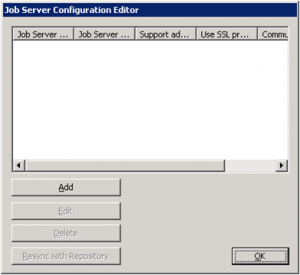

Press Configuration Editor and a new window opens. Press Add to create a new Job Server.

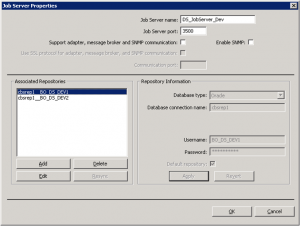

Keep the default port and choose a name or keep the default one for the new Job server. Then press Add to associate the repositories with the job server created.

Once you press “Add” you will be able to add information in the right part of the window “Repository Information”. Add the information of the local repositories that you have created in the previous steps.

At the end you will see two local repositories associated.

With this step you have already finished the repository configuration and are now able to manage the security from the CMC and use the regular BO users to log into the designer and add security to the Data Services repositories like you do with every BusinessObjects application.

If you have any questions or other tips, share them with us by leaving a comment below.