Your current SAP BusinessObjects environment is key to your organization. However, we have found that some of our customers maintain a large number of licenses without getting full value from them. It is time to review this environment and convert those unused licenses to SAP Analytics Cloud.

Moving to the Cloud? Evaluating a New Data Warehouse to Replace SAP HANA?

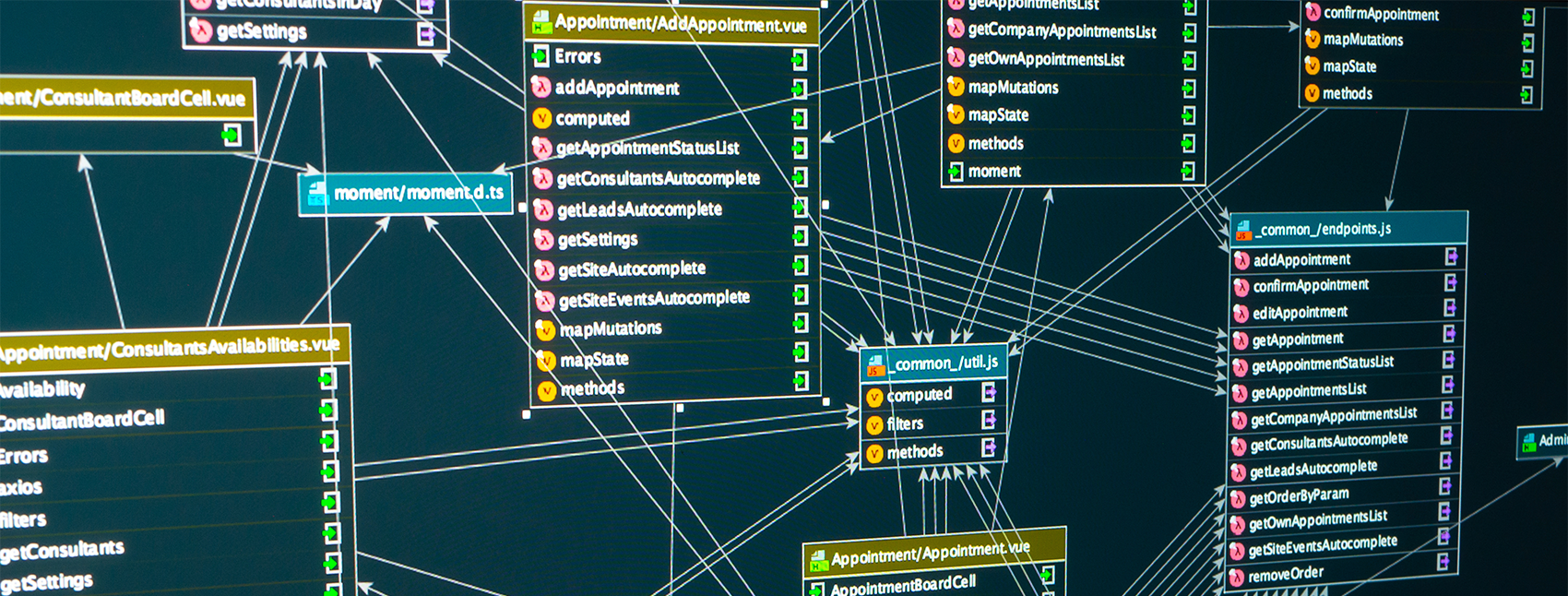

Migrating to a new database system can be overwhelming, especially given how many components need to be updated and executed. If you are looking to move from SAP HANA to another technology, or simply to extend your analytic components beyond the SAP HANA Views area, chances are you will need some help with the tables, graphical views and content.

Qlik Replicate: A flexible data replication solution for just in time insights from your SAP Systems

SAP HANA Cloud Series Part 6: How to Apply Analytics Privileges

SAP HANA Cloud Series Part 5 – How to Improve Calculation

SAP HANA Cloud Series Part 4 – How to Build Up a Federated Scenario (

SAP HANA Cloud Series Part 3 – How to Build Up a Federated Scenario (I

In this session, you will learn how to create and manage users, including the creation of roles, certificates, remote sources, and so on. The steps we follow in this article are essential before continuing with the series, because your configuration must be correct in order to work with database artifacts contained in an external instance.

SAP HANA Cloud Series Part 2: How to Define Tables and Roles

SAP HANA Cloud Series Part 1: How to Set Up the Environment

More and more organizations are realizing the key role that data analytics plays in maintaining a competitive edge and meeting their strategic goals. But as data volumes grow, pressure on budgets increases, and real-time analytics for data-driven decision making becomes ever more crucial, on-premise data management solutions struggle to cope.

Unleash the Power of Your SAP HANA Database: Gain Valuable Insights Through the Built-In Machine Learning and Predictive Libraries

Prediction models are trending globally and are now more widely used than ever before. If you’re looking to take advantage of this technology to better understand your data, our experts are here to support you, whether you are an existing SAP HANA customer or are planning to move to SAP HANA soon.